| [1] |

王丽艳. “双一流”高校图书馆科研评价服务研究[D]. 合肥: 安徽大学, 2021.

|

|

Wang Liyan. Study on Research Evaluation Service of "Double First-Rate" University Library[D]. Hefei: Anhui University, 2021.

|

| [2] |

李津, 赵呈刚. 情报分析服务支撑高校“双一流”建设的实践与思考[J]. 图书情报工作, 2018, 62(24): 18-26.

|

|

Li Jin, Zhao Chenggang. Practice and consideration of information analysis service supporting the construction of "double-first-class" in colleges and universities[J]. Library and Information Service, 2018, 62(24): 18-26.

|

| [3] |

吴爱芝. 高校图书馆学科战略情报服务探索性研究[J]. 大学图书馆学报, 2023, 41(5): 18-25.

|

|

Wu Aizhi. Exploration study on disciplinary strategic intelligence service of university libraries[J]. Journal of Academic Libraries, 2023, 41(5): 18-25.

|

| [4] |

杨眉, 潘卫, 董珏, 等. 一流学科建设视角下的情报实证研究与服务策略探析[J]. 图书情报工作, 2022, 66(5): 72-79.

|

|

Yang Mei, Pan Wei, Dong Jue, et al. The intelligence empirical research and service strategy analysis from the perspective of first-class discipline construction[J]. Library and Information Service, 2022, 66(5): 72-79.

|

| [5] |

潘卫, 杨眉, 董珏. 支撑高校管理与决策的产品化情报服务[J]. 大学图书馆学报, 2016, 34(6): 43-50.

|

|

Pan Wei, Yang Mei, Dong Jue. Product oriented intelligence service to support the scientific research management and decision making in universities[J]. Journal of Academic Libraries, 2016, 34(6): 43-50.

|

| [6] |

舒予, 张黎俐, 张雅晴. 科研实体科研绩效的评价及实证研究[J]. 情报杂志, 2017, 36(10): 41-47.

|

|

Shu Yu, Zhang Lili, Zhang Yaqing. Evaluation and empirical research of the research entity's scientific performance[J]. Journal of Intelligence, 2017, 36(10): 41-47.

|

| [7] |

张雪蕾, 魏青山, 尹飞. 构建服务扩展型机构知识库的实践与探索——以西安交通大学为例[J]. 情报理论与实践, 2017, 40(7): 93-98.

|

|

Zhang Xuelei, Wei Qingshan, Yin Fei. The practice and exploration of constructing service-extended institutional repository[J]. Information Studies (Theory & Application), 2017, 40(7): 93-98.

|

| [8] |

介凤, 詹华清, 方向明, 等. 嵌入科研信息管理的高校机构知识库服务实践——以上海大学机构知识库为例[J]. 图书情报工作, 2020, 64(8): 57-63.

|

|

Feng Jie, Zhan Huaqing, Fang Xiangming, et al. Practices of the university IR services to support its research information management - A case study of Shanghai University[J]. Library and Information Service, 2020, 64(8): 57-63.

|

| [9] |

姚晓娜, 祝忠明, 刘巍, 等. 机构知识库在科研评价服务中的应用及实现[J]. 数字图书馆论坛, 2020, 16(6): 22-27.

|

|

Yao Xiaona, Zhu Zhongming, Liu Wei, et al. The application and practice of institutional repository in scientific research evaluation[J]. Digital Library Forum, 2020, 16(6): 22-27.

|

| [10] |

Brei F, Frey J, Meyer LP. Leveraging small language models for Text2SPARQL tasks to improve the resilience of AI assistance[C]//Ceur Workshop Proceedings. Hersonissos, Greece, 2024.

|

| [11] |

Jiang Jinhao, Zhou Kun, Dong Zican, et al. StructGPT: A general framework for large language model to reason over structured data[C]//Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. Singapore: Association for Computational Linguistics 2023: 9237-9251.

|

| [12] |

石秀选, 李均. 生成式人工智能技术赋能大学学术评价: 机遇、挑战及应对[J]. 高教探索, 2024(4): 5-13.

|

|

Shi Xiuxuan, Li Jun. Empowering university academic evaluation with generative AI technology: Opportunities, challenges, and responses[J]. Higher Education Exploration, 2024(4): 5-13.

|

| [13] |

吴进, 冯劭华, 庞萍, 等. 高校图书馆应用ChatGPT的前景、法律困境和因应之策[J]. 情报探索, 2024(1): 92-98.

|

|

Wu Jin, Feng Shaohua, Pang Ping, et al. Prospects legal dilemmas and countermeasures of ChatGPT in university libraries[J]. Information Research, 2024(1): 92-98.

|

| [14] |

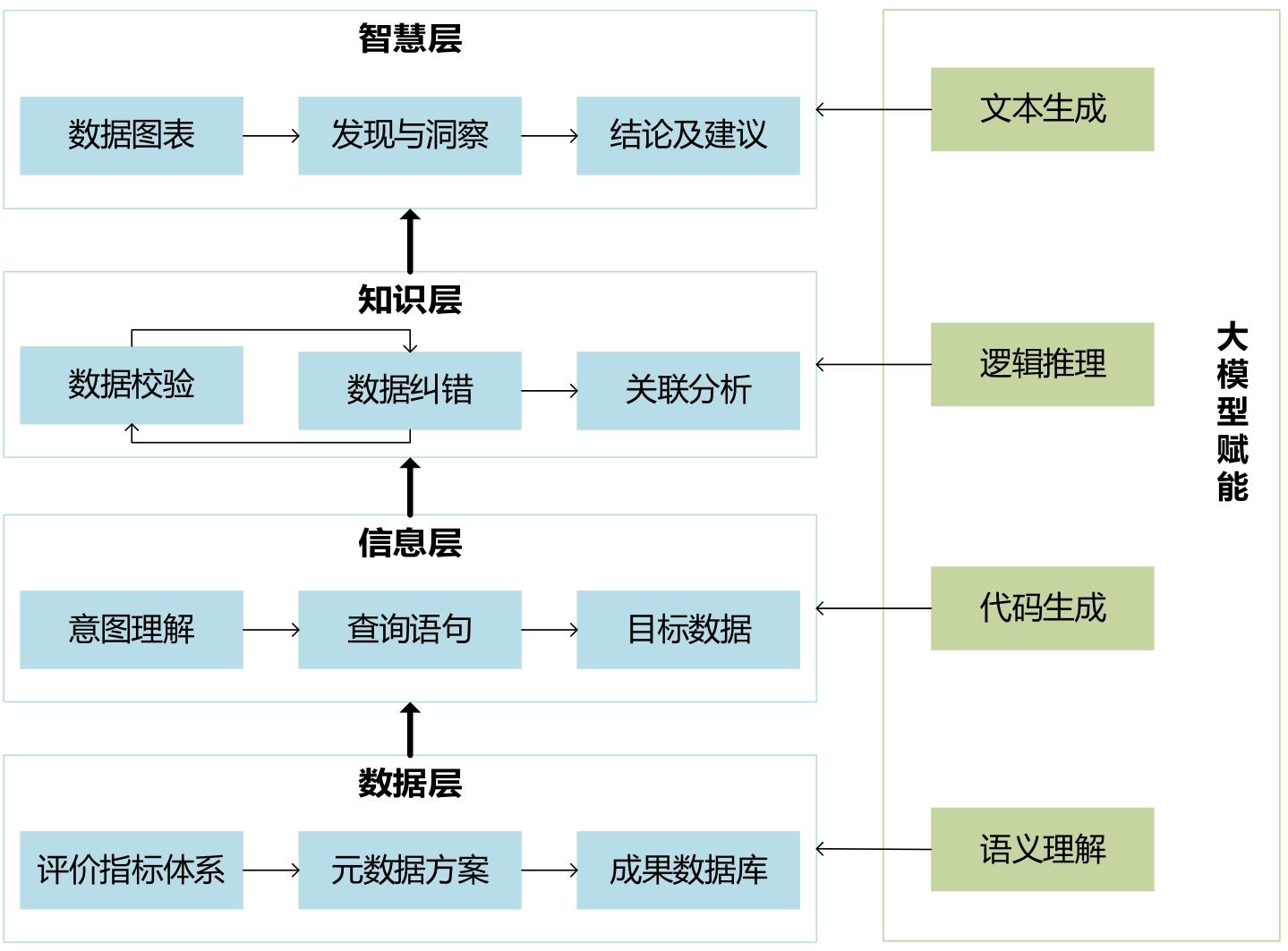

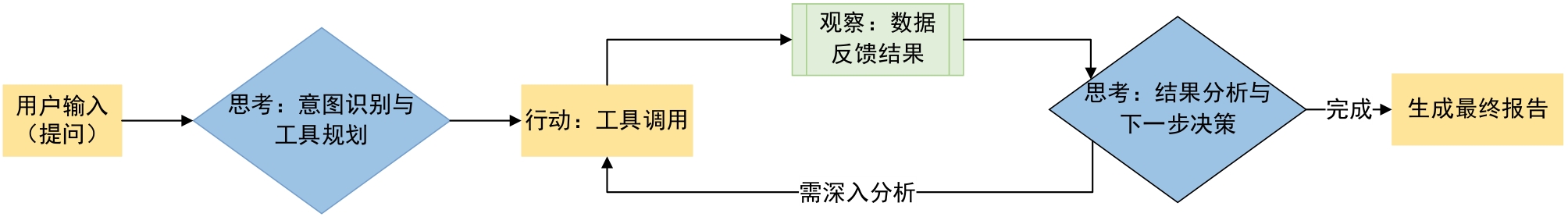

张兴旺, 李洁, 李思凡, 等. DeepSeek赋能图书馆知识服务的理论模型、模式创新与重要启示[J]. 农业图书情报学报, 2025, 37(1): 4-16.

|

|

Zhang Xingwang, Li Jie, Li Sifan, et al. Theoretical model, model innovation, and important implications of DeepSeek empowering library knowledge services[J]. Journal of Library and Information Science in Agriculture, 2025, 37(1): 4-16.

|

| [15] |

商汤科技. 办公小浣熊[EB/OL]. [2025-09-13].

|

| [16] |

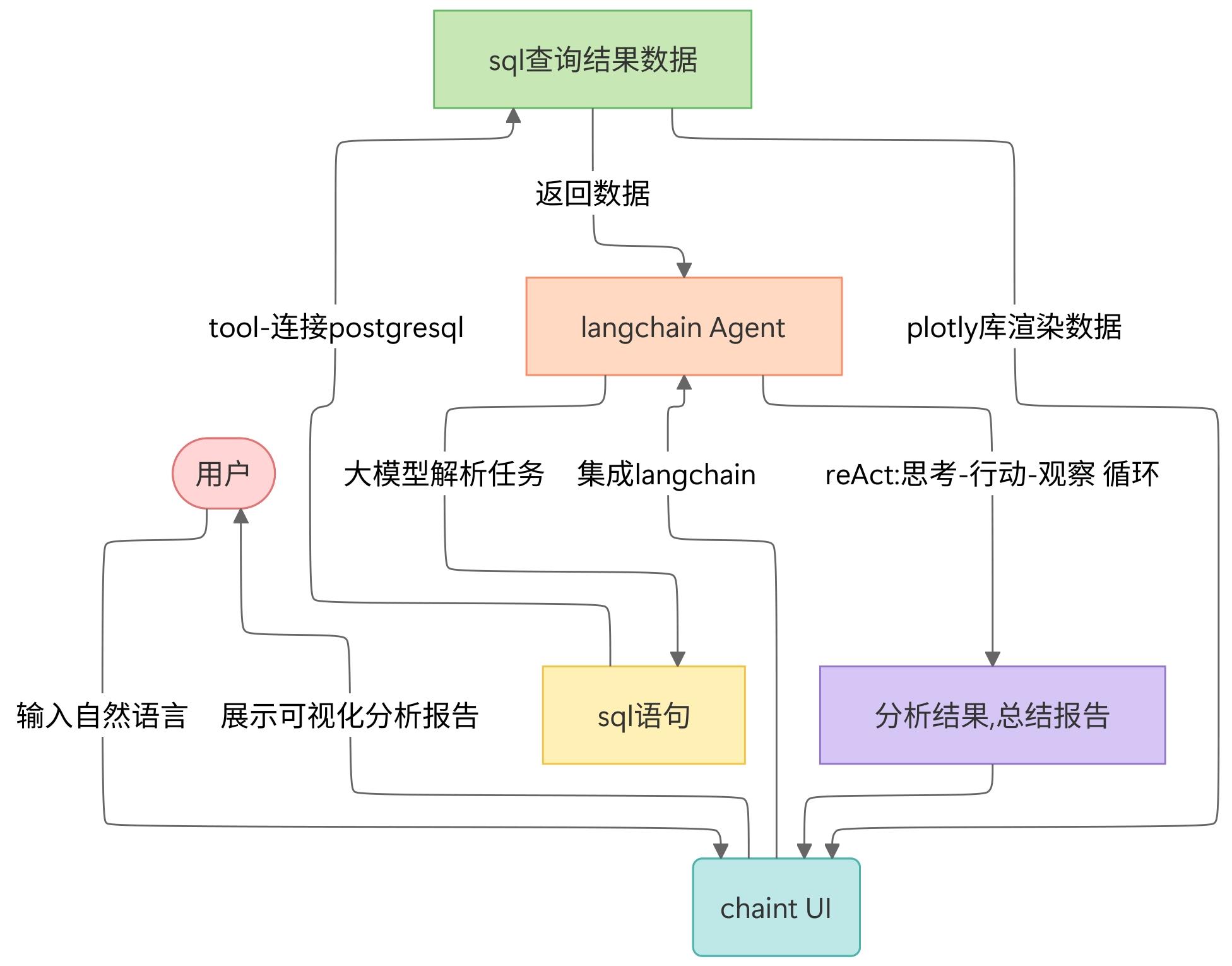

Chainlit. Open source address[EB/OL]. [2025-09-13].

|

| [17] |

Langchain. Official introduction[EB/OL]. [2025-07-22].

|

| [18] |

Ai Moonshot. User manual[EB/OL]. [2025-09-13].

|